You’ve probably heard of facial microexpressions. The idea is that they reveal valuable information about what a person is thinking, how they feel, and what they plan to do.

Recent innovations in computer vision and deep learning algorithms have led to a flood of models that can be used to extract facial landmarks, action units, and facial microexpressions with speed and precision.

Today we’ll explore one of these solutions in Python.

What Are They?

Facial microexpressions are brief, involuntary facial expressions. They typically last only a fraction of a second, which makes them difficult to detect. They are an important part of non-verbal communication and can help identify what a person is feeling or thinking, even when they are trying to hide it.

For example, a person may try to hide their fear behind a calm and controlled expression, but a microexpression of fear may appear for a brief moment, revealing what the person really feels.

Installing and Using Py-Feat

First, what is Py-Feat?

Py-Feat stands for “Python Facial Expression Analysis Toolbox”. According to its creators:

“Py-Feat provides a comprehensive set of tools and models to easily detect facial expressions (action units, emotions, facial landmarks) from images and videos, preprocess and analyze this data, and also visualize it.”

Let’s install Py-Feat in our environment with pip by running `pip install py-feat`.

After installation, we create a detector, the object that bundles the face, landmark, action unit, and emotion models.
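Here is a minimal sketch of creating the detector, assuming a Py-Feat release that exposes `Detector` in the top-level `feat` package and accepts a `device` argument. The helper name `build_detector` is made up for this example. Note that Py-Feat downloads its pretrained models the first time a detector is created.

```python
def build_detector(device="cpu"):
    """Return a Py-Feat detector configured with the library's default models.

    `build_detector` is a hypothetical helper, not part of Py-Feat.
    """
    # Imported here so this file can be loaded even before Py-Feat is installed.
    from feat import Detector

    # No model arguments: Py-Feat falls back to its default models for
    # face, landmark, action unit, and emotion detection.
    return Detector(device=device)
```

Calling `detector = build_detector()` should be enough for the rest of this post.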

Now we can start our analyses, and just for fun I’ll try to use the most absurd images possible!

First image…

To load an image and run the analysis, we pass its path to the detector.
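The call could look roughly like the sketch below. The method name depends on the installed version: I'm assuming that older releases expose `detect_image` and that more recent ones use a generic `detect` method with `data_type="image"`, so check the documentation for your version. The wrapper `analyze_image` is a hypothetical name.

```python
def analyze_image(detector, image_path):
    """Run face, landmark, action unit, and emotion detection on one image.

    `analyze_image` is a hypothetical wrapper, not part of Py-Feat.
    """
    # Releases that ship `detect_image` take the image path directly.
    if hasattr(detector, "detect_image"):
        return detector.detect_image(image_path)
    # Assumption: otherwise the generic `detect` method with
    # data_type="image" is available, as in more recent releases.
    return detector.detect(image_path, data_type="image")
```

With a detector in hand, `result = analyze_image(detector, "my_image.jpg")` returns the detection results. The file name is a placeholder.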

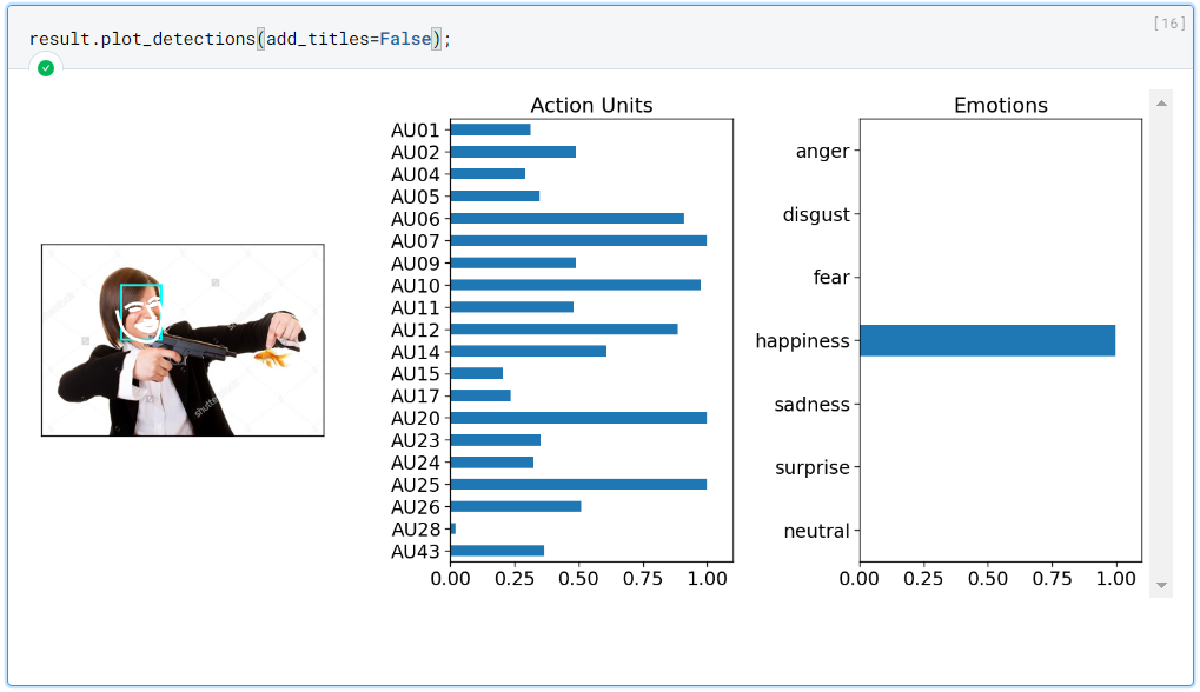

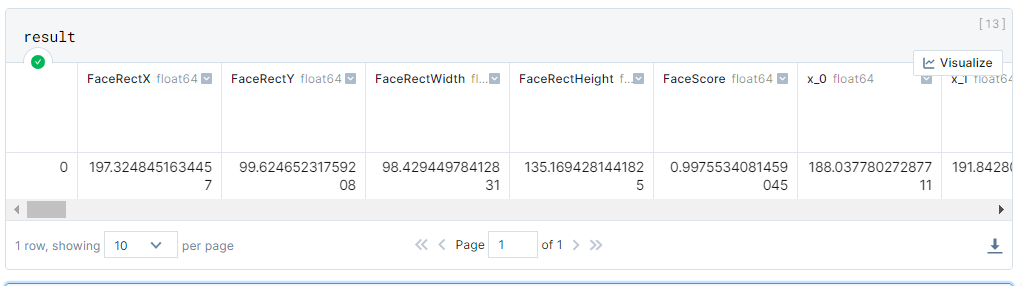

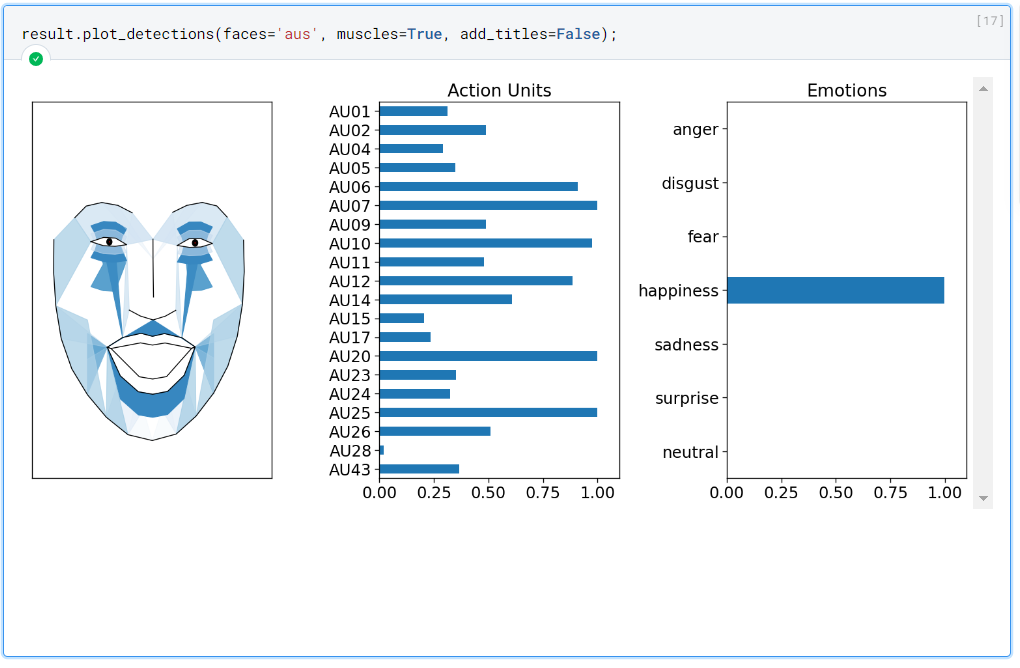

The result is an object of type “feat.data.Fex”, but don’t be alarmed: it’s basically a Pandas DataFrame with some extra methods. Notice below that it stores the information extracted from our image, such as the face bounding box, facial landmarks, action units, and emotion scores.

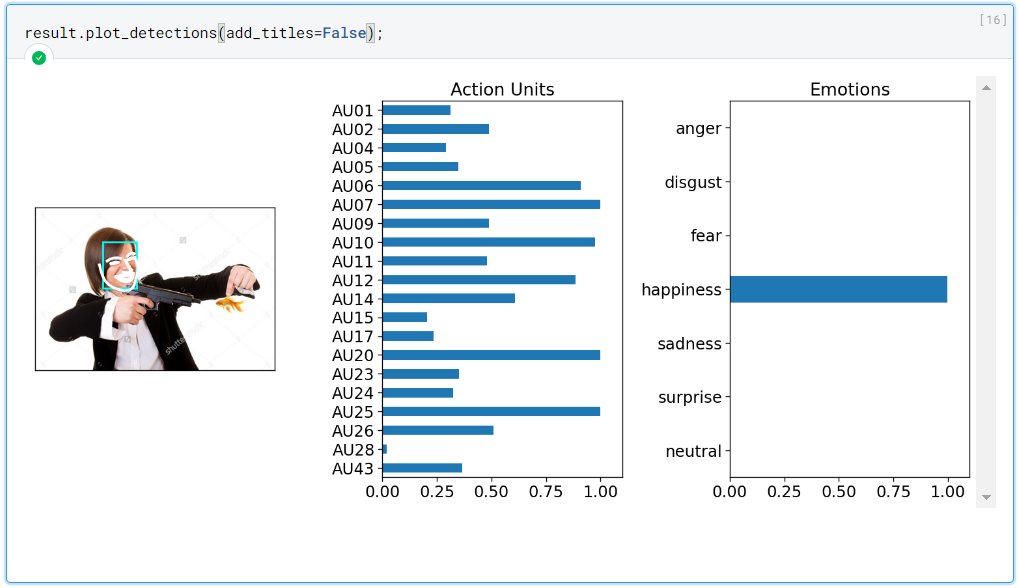

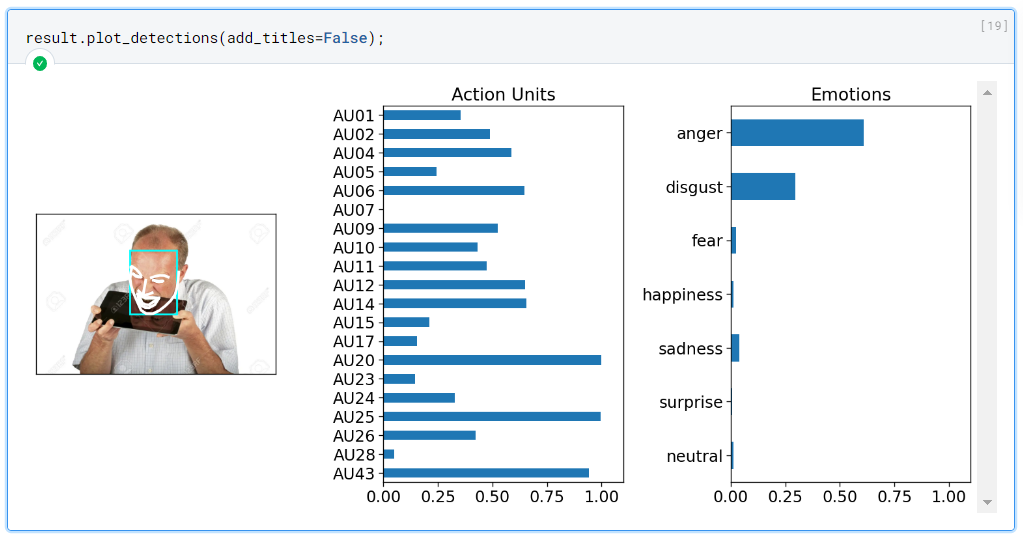

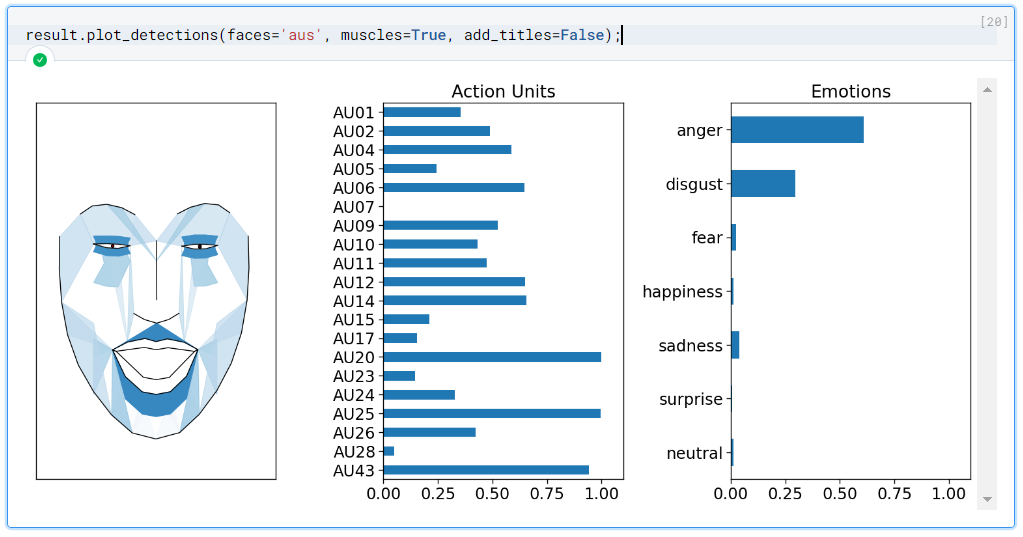
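To pull specific pieces out of the result, a sketch like the following may help. It assumes the Fex object exposes the `emotions` and `aus` column groups as attributes, with one row per detected face. The function name `first_face_scores` is made up for this example.

```python
def first_face_scores(result):
    """Return the emotion and action unit scores of the first detected face.

    `result` is expected to be the Fex object returned by the detector.
    """
    # Each attribute is DataFrame-like with one row per detected face,
    # so `.iloc[0]` selects the first face and `.to_dict()` flattens it.
    emotions = result.emotions.iloc[0].to_dict()
    action_units = result.aus.iloc[0].to_dict()
    return {"emotions": emotions, "action_units": action_units}
```

Because Fex behaves like a DataFrame, the usual Pandas tools such as `result.head()` or `result.columns` should also work for a quick look.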

Our result object has a method called “plot_detections” that we can use to better visualize its information. See some examples below.
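As a rough sketch, the call might look like this. I'm assuming `plot_detections` accepts the `faces` and `muscles` arguments, as in the Py-Feat versions I have seen. The wrapper `plot_face` is a hypothetical name.

```python
def plot_face(result, show_muscles=True):
    """Draw the detections stored in a Fex result.

    `plot_face` is a hypothetical wrapper around `plot_detections`.
    """
    if show_muscles:
        # Standardized face colored by action unit activity,
        # with the underlying facial muscles overlaid.
        return result.plot_detections(faces="aus", muscles=True)
    # Landmarks drawn over the detected face.
    return result.plot_detections(faces="landmarks")
```

The muscle view is the one that shows which groups of facial muscles contribute to the expression.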

Truly incredible. In addition to correctly detecting the emotion present in the image — in this case “happiness” — Py-Feat also gives us this amazing visualization of which sets of facial muscles are being activated to compose that expression.

Let’s do one more test with a different image containing a relatively more complex emotion.

Using the image above we get the following result:

Incredible — another success. Notice the following points:

- The face was only partially visible in the image, and yet Py-Feat was able to detect and classify it correctly;

- The face doesn’t contain just one emotion, but two main ones: anger and disgust.
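To read off the main emotions programmatically, one option is to rank the emotion scores and keep the top two. The sketch below works on a plain dictionary of scores; the numbers are made up for illustration and are not the actual output for this image. `top_emotions` is a hypothetical helper.

```python
def top_emotions(scores, k=2):
    """Return the k highest-scoring emotions as (label, score) pairs."""
    ranked = sorted(scores.items(), key=lambda item: item[1], reverse=True)
    return ranked[:k]


# Illustrative values only, not actual Py-Feat output.
example_scores = {
    "anger": 0.48, "disgust": 0.39, "fear": 0.03, "happiness": 0.01,
    "sadness": 0.04, "surprise": 0.02, "neutral": 0.03,
}
print(top_emotions(example_scores))
# [('anger', 0.48), ('disgust', 0.39)]
```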

Pseudoscience?

We saw above that Py-Feat is an incredible tool for detecting facial microexpressions, but should you trust it?

Although popular culture now treats this theory as absolute truth (thanks to some YouTube channels and fiction series), the scientific community has not yet reached a consensus on the theory of “universal facial microexpressions”. For those interested in the topic, I recommend watching the video below from the channel “Física e Afins”.

Conclusion

What do you think — are facial microexpressions pseudoscience or even outdated science?

Please tell me your opinion below! What did you think of the analysis? Would you have done something more or different?