If you’re developing with AI in 2026 and still haven’t mastered prompting techniques, you’re leaving a large share of what LLMs can do on the table. I’m not exaggerating: the difference between a well-structured prompt and a generic one can be the difference between a mediocre response and a production-level solution.

After working on various projects involving LLMs, I’ve noticed that most developers underestimate the power of a good prompt. Let’s change that today.

Why Does Prompt Engineering Matter?

Before we dive into the techniques, understand this: LLMs are pattern-completion machines. They don’t “understand” in the human sense; they’re extraordinarily good at continuing the pattern you started. That’s why how you start that pattern (your prompt) largely determines the quality of the response.

Think of it like declarative programming: you don’t say how to do it, but describe what you want so clearly that the model can infer the correct path.

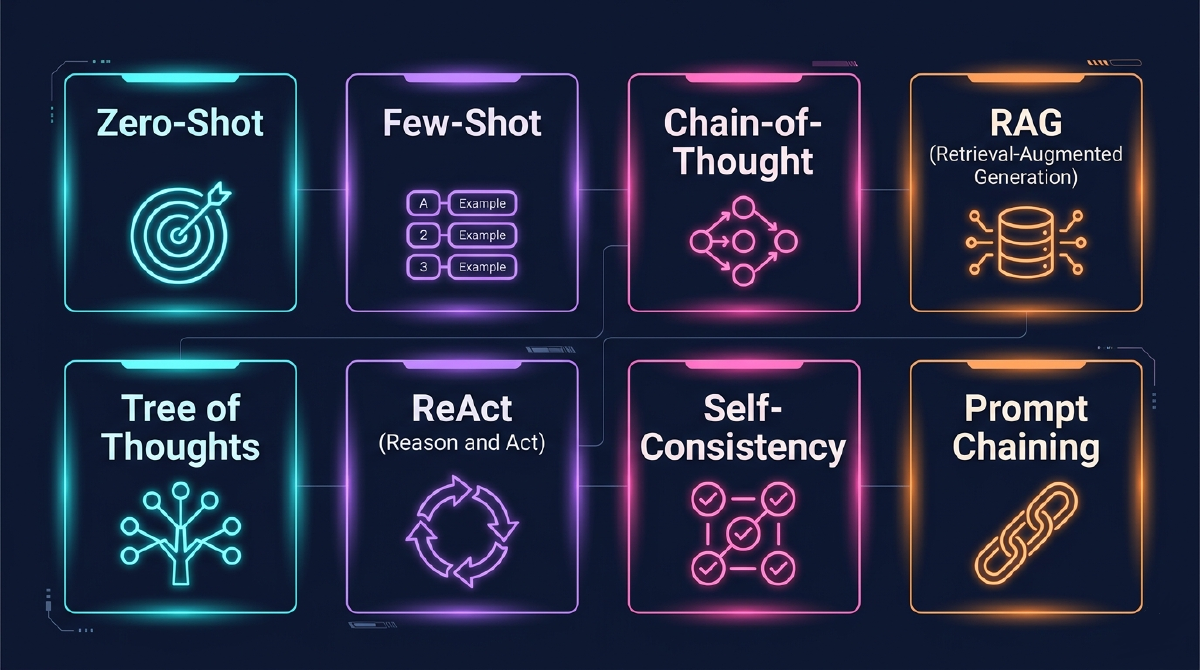

1. Zero-Shot Prompting: The Foundation

What it is: You simply ask for what you want, without prior examples.


When to use: This technique works well for simple, straightforward tasks, especially with large, modern models like GPT-5+ and Claude 4.5+.

Avoid using it on complex problems or those requiring a specific output format.

Pro tip: Add role context (role prompting):


This often works noticeably better than the basic prompt, though how much depends on the task and the model.
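As a minimal sketch of the difference, the helper below builds a zero-shot prompt and optionally prepends a role line. The function name `zero_shot_prompt`, the role text, and the sample task are hypothetical, chosen only for illustration.

```python
from typing import Optional


def zero_shot_prompt(task: str, role: Optional[str] = None) -> str:
    """Build a zero-shot prompt: the task alone, with no worked examples."""
    parts = []
    if role:
        # Role prompting: tell the model whose perspective to adopt.
        parts.append(f"You are {role}.")
    parts.append(task.strip())
    return "\n\n".join(parts)


# Hypothetical task and role, for illustration only.
basic = zero_shot_prompt("Explain what a race condition is.")
with_role = zero_shot_prompt(
    "Explain what a race condition is.",
    role="a senior backend engineer mentoring a junior developer",
)
print(with_role)
```

The task text is identical in both variants; only the framing changes, which makes it easy to compare the two outputs side by side.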

2. Few-Shot Prompting: Teach by Example

What it is: As the name suggests, you provide a few examples before asking for the real task.


Why it works: You’re establishing the exact pattern you want.

The model learns:

- The output format (JSON)

- The level of granularity

- How to handle mixed aspects

How many examples to give? About 2-3 for large models and 5-8 for smaller ones. Remember that more examples are not automatically better; too many can confuse the model.
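One way to assemble such a prompt is sketched below. The review texts, aspect labels, and the `few_shot_prompt` helper are all hypothetical; the point is only that every example follows the same Input/Output shape and the last one is left open for the model to complete.

```python
import json

# Hypothetical labeled examples: review text -> per-aspect sentiment as JSON.
EXAMPLES = [
    ("The battery lasts all day but the screen scratches easily.",
     {"battery": "positive", "screen": "negative"}),
    ("Fast delivery and great packaging.",
     {"delivery": "positive", "packaging": "positive"}),
]


def few_shot_prompt(examples, query: str) -> str:
    """Format each example as Input/Output, then leave the last Output open."""
    parts = ["Extract the sentiment for each aspect mentioned. Answer in JSON."]
    for text, label in examples:
        parts.append(f"Input: {text}\nOutput: {json.dumps(label)}")
    # The final block stops at "Output:" so the model continues the pattern.
    parts.append(f"Input: {query}\nOutput:")
    return "\n\n".join(parts)


prompt = few_shot_prompt(EXAMPLES, "Great camera, terrible speakers.")
print(prompt)
```

The first example deliberately mixes a positive and a negative aspect, so the model sees how mixed reviews should be split.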

3. Chain-of-Thought (CoT): The Technique That Changes Everything

This is, without a doubt, the most impactful technique I’ve discovered. CoT teaches the model to “think out loud.”

Poor prompt:


Prompt with CoT:


Result:


When to use CoT: This technique shines in mathematical or logical reasoning, code debugging, complex requirements analysis, and in general, any task that benefits from decomposition into smaller steps.

Zero-Shot CoT (the simplest and most powerful version):

Simply add at the end: "Let's think step by step"

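Seriously. This one phrase can raise accuracy on reasoning problems by up to 40%, though the gain depends heavily on the model and the task, and models that already reason step by step benefit less.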
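To make the contrast concrete, here is a small sketch that builds a direct prompt and a zero-shot CoT prompt for the same question, then pulls the final answer out of a reasoning trace. The question, the `Answer:` convention, and the canned response are assumptions made for this example, not output from a real model.

```python
from typing import Optional

COT_SUFFIX = "Let's think step by step."


def direct_prompt(question: str) -> str:
    """Ask for the answer only; the model gets no room to reason."""
    return f"{question}\nAnswer with just the final result."


def zero_shot_cot_prompt(question: str) -> str:
    """Append the step-by-step cue and ask for a clearly marked final answer."""
    return (
        f"{question}\n\n{COT_SUFFIX}\n"
        "Finish with a line of the form 'Answer: <result>'."
    )


def extract_answer(response: str) -> Optional[str]:
    """Return the text after the last 'Answer:' marker, if there is one."""
    for line in reversed(response.splitlines()):
        if line.strip().lower().startswith("answer:"):
            return line.split(":", 1)[1].strip()
    return None


# Hypothetical question and a hand-written response standing in for model output.
question = (
    "A job queue holds 120 tasks. 3 workers each process 8 tasks per minute. "
    "How many minutes until the queue is empty?"
)
canned = "3 workers * 8 tasks = 24 tasks per minute.\n120 / 24 = 5.\nAnswer: 5 minutes"
print(extract_answer(canned))  # 5 minutes
```

Asking for a marked final line keeps the reasoning visible while still giving your code something reliable to parse.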

4. Self-Consistency: Multiple Perspectives

The concept: Generate multiple responses with CoT and choose the most common one.

Example in Python (with OpenAI API):


Trade-off: with five samples you make 5x the API calls, but the majority answer is much more reliable for critical decisions.
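The voting step itself is simple enough to sketch without committing to a particular API. In the version below, `sample` is a hypothetical callable that stands in for one model call at a temperature above zero and returns only the extracted final answer; the canned answers are made up for the demo.

```python
from collections import Counter
from typing import Callable, Tuple


def self_consistent_answer(
    sample: Callable[[str], str], prompt: str, n: int = 5
) -> Tuple[str, float]:
    """Sample n answers and return the most common one with its vote share."""
    answers = [sample(prompt).strip() for _ in range(n)]
    winner, votes = Counter(answers).most_common(1)[0]
    return winner, votes / n


# Stand-in for a real model call: replays canned final answers.
canned = iter(["42", "42", "41", "42", "40"])
answer, agreement = self_consistent_answer(lambda _: next(canned), "What is 6 * 7?")
print(answer, agreement)  # 42 0.6
```

Returning the vote share alongside the answer is a design choice: a low agreement value is a useful signal that the result should be escalated to a human or retried.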

Real use case: Business rule validation, structured data extraction from contracts, compliance analysis.

5. Generate Knowledge Prompting: Context Before the Question

Make the model generate relevant knowledge before answering.

Naive approach:


With Knowledge Generation:


The second response is significantly more accurate and detailed because the model “activated” the relevant context.
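A two-call version of this idea might look like the sketch below. `complete` is a hypothetical callable wrapping whatever model client you use, and the prompt wording, question, and canned replies are assumptions for illustration.

```python
from typing import Callable, List


def answer_with_generated_knowledge(
    complete: Callable[[str], str], question: str, n_facts: int = 3
) -> str:
    """Two calls: first elicit background facts, then answer using them."""
    knowledge_prompt = (
        f"List {n_facts} relevant facts for answering the question below. "
        f"Do not answer it yet.\n\nQuestion: {question}"
    )
    facts = complete(knowledge_prompt)
    answer_prompt = (
        f"Facts:\n{facts}\n\n"
        f"Using the facts above, answer the question.\n\nQuestion: {question}"
    )
    return complete(answer_prompt)


# Stand-in for a real model call: records prompts and returns canned text.
seen: List[str] = []


def fake_complete(prompt: str) -> str:
    seen.append(prompt)
    return "- Indexes speed up joins." if len(seen) == 1 else "Index them."


result = answer_with_generated_knowledge(fake_complete, "Why index foreign keys?")
print(result)
```

Keeping the two calls separate also lets you inspect or correct the generated facts before they influence the final answer.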

Practical use in development:


6. Prompt Chaining: Decompose Complex Tasks

Problem: A single prompt trying to do everything results in superficial responses, context loss, and debugging difficulty. The solution is to use a chain of specialized prompts, where each has a clear responsibility.

Example — Code Review Analysis System:


Advantages: Each step is individually verifiable, it’s easy to identify where something went wrong, you can cache intermediate steps to save API calls, and it also allows parallelization when steps are independent.
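A chain like this can be sketched as an ordered list of prompt templates, each reading the outputs of earlier steps. The step names, template wording, and `complete` callable below are hypothetical; a real pipeline would plug in an actual model client.

```python
from typing import Callable, Dict, List, Tuple

# Each step is (name, template). Templates may reference {code} and the
# outputs of earlier steps by name. Names and wording are hypothetical.
REVIEW_CHAIN: List[Tuple[str, str]] = [
    ("summary", "Summarize what this code does:\n{code}"),
    ("issues", "Given this summary:\n{summary}\n\nList bugs and risks in:\n{code}"),
    ("report", "Write a short review from these issues:\n{issues}"),
]


def run_chain(
    complete: Callable[[str], str], chain: List[Tuple[str, str]], **inputs: str
) -> Dict[str, str]:
    """Run steps in order, storing each output so later steps can use it."""
    state = dict(inputs)
    for name, template in chain:
        state[name] = complete(template.format(**state))
    return state


def fake_complete(prompt: str) -> str:
    # Stand-in for a model call: echoes the first line of the prompt.
    return "OUT[" + prompt.splitlines()[0] + "]"


state = run_chain(fake_complete, REVIEW_CHAIN, code="def f(x): return x / 0")
print(state["report"])
```

Because every intermediate output is kept in `state`, each step can be logged, cached, or checked on its own.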

7. Tree of Thoughts (ToT): Solution Exploration

ToT extends CoT: the model explores several reasoning paths, evaluates each one, and continues only with the most promising.


When to use: Ideal for architectural decisions, problems with multiple valid solutions, and especially when the cost of choosing the wrong implementation is high.
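One simple way to realize this is a beam search over partial solutions, sketched below. In practice `propose` and `score` would each be model calls; here they are toy stand-ins over a hypothetical caching decision, with made-up scores, so the control flow can be run as is.

```python
from typing import Callable, List


def tree_of_thoughts(
    propose: Callable[[str], List[str]],
    score: Callable[[str], float],
    root: str,
    depth: int = 2,
    beam: int = 2,
) -> str:
    """Beam search over partial solutions: expand, score, keep the best few."""
    frontier = [root]
    for _ in range(depth):
        candidates = [child for node in frontier for child in propose(node)]
        if not candidates:
            break
        frontier = sorted(candidates, key=score, reverse=True)[:beam]
    return max(frontier, key=score)


# Toy stand-ins for model calls (hypothetical options and scores).
CHOICES = [["redis", "in-memory", "none"], ["write-through", "write-back"]]
SCORES = {"redis": 3, "in-memory": 2, "none": 0, "write-through": 2, "write-back": 1}


def propose(node: str) -> List[str]:
    level = node.count(">")  # how many decisions this path already contains
    return [f"{node} > {choice}" for choice in CHOICES[level]]


def score(node: str) -> float:
    return sum(SCORES.get(part.strip(), 0) for part in node.split(">"))


best = tree_of_thoughts(propose, score, "cache")
print(best)  # cache > redis > write-through
```

The `beam` parameter controls the cost: a wider beam explores more alternatives per level but multiplies the number of model calls.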

Simple implementation:


8. ReAct: Reasoning + Action

ReAct combines thinking and action in iterative cycles. It’s the foundation of tools like LangChain Agents.
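The core loop is small enough to sketch by hand. The version below assumes a plain-text convention of `Thought:`, `Action: tool[argument]`, `Observation:`, and `Final Answer:` lines; the tool name `http_status`, the scripted model replies, and the `complete` callable are hypothetical and do not reflect any specific framework's API.

```python
import re
from typing import Callable, Dict

ACTION_RE = re.compile(r"^Action:\s*(\w+)\[(.*)\]\s*$", re.MULTILINE)


def react_loop(
    complete: Callable[[str], str],
    tools: Dict[str, Callable[[str], str]],
    question: str,
    max_steps: int = 5,
) -> str:
    """Alternate model output (Thought/Action) with tool results (Observation)."""
    transcript = f"Question: {question}\n"
    for _ in range(max_steps):
        step = complete(transcript)
        transcript += step + "\n"
        if "Final Answer:" in step:
            return step.split("Final Answer:", 1)[1].strip()
        match = ACTION_RE.search(step)
        if match:
            name, arg = match.groups()
            tool = tools.get(name)
            result = tool(arg) if tool else f"unknown tool {name}"
            # Feed the tool result back so the next step can reason about it.
            transcript += f"Observation: {result}\n"
    return "no answer within step limit"


# Scripted replies standing in for a real model (hypothetical content).
script = iter([
    "Thought: I should check the endpoint status.\nAction: http_status[/api/users]",
    "Thought: 500 means a server error.\nFinal Answer: The endpoint fails server-side.",
])
tools = {"http_status": lambda path: "500"}
answer = react_loop(lambda _: next(script), tools, "Why does /api/users fail?")
print(answer)
```

The `max_steps` cap matters in practice: without it, a model that never emits a final answer would loop indefinitely.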

Pattern:


Real example — API Debugging:


9. RAG (Retrieval Augmented Generation): External Knowledge

The fundamental problem with LLMs: Outdated knowledge limited to training data.

RAG solves this:

- Retrieve: Search for relevant information in your database/documents

- Augment: Inject that information into the context

- Generate: Model responds based on the augmented context

Example — Support System:


Tips for effective RAG:

- Always instruct to cite sources

- Instruct to admit when it doesn’t know

- Use semantic embeddings for search (not just keywords)

- Test different top_k values (3-7 is usually ideal)
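The retrieve, augment, generate steps and the tips above can be sketched end to end. To stay self-contained, retrieval here ranks by simple word overlap, which is only a toy stand-in for the embedding search recommended above; the documents, file names, and helper names are hypothetical.

```python
import re
from typing import List, Set, Tuple

# Hypothetical support documents: (source id, text).
DOCS = [
    ("billing.md", "Refunds are processed within 5 business days."),
    ("account.md", "Reset your password from the login page."),
    ("shipping.md", "Orders ship within 2 business days."),
]


def tokens(text: str) -> Set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, docs, top_k: int = 3) -> List[Tuple[str, str]]:
    """Rank documents by word overlap with the query (toy stand-in for embeddings)."""
    q = tokens(query)
    ranked = sorted(docs, key=lambda d: len(q & tokens(d[1])), reverse=True)
    return ranked[:top_k]


def rag_prompt(query: str, docs, top_k: int = 3) -> str:
    """Inject retrieved passages and ask for cited, grounded answers."""
    hits = retrieve(query, docs, top_k)
    context = "\n".join(f"[{src}] {text}" for src, text in hits)
    return (
        "Answer using only the context below. Cite the source in brackets. "
        "If the context does not contain the answer, say you don't know.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


prompt = rag_prompt("How long do refunds take?", DOCS, top_k=1)
print(prompt)
```

Tagging each passage with its source id is what makes the "cite sources" instruction checkable: a citation that names no retrieved document is easy to flag.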

10. Meta Prompting: The Model Creates Its Own Prompts

At the most advanced level, you use the LLM itself to write better prompts.


Result: A prompt often better than what you’d write manually.
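A rough sketch of the idea, including a simple improvement loop, is shown below. Both `complete` (the model call) and `evaluate` (a scoring function over a prompt) are hypothetical callables you would supply; the keyword-counting evaluator and scripted rewrites exist only so the loop can run without a model.

```python
from typing import Callable, List


def meta_prompt(task: str, draft: str, failures: List[str]) -> str:
    """Ask the model to rewrite a prompt, given where the draft went wrong."""
    failure_text = "\n".join(f"- {f}" for f in failures) or "- (none recorded)"
    return (
        "You are an expert prompt engineer.\n"
        f"Task the prompt must accomplish: {task}\n\n"
        f"Current prompt:\n{draft}\n\n"
        f"Observed failures:\n{failure_text}\n\n"
        "Rewrite the prompt to fix these failures. Return only the new prompt."
    )


def improve(
    complete: Callable[[str], str],
    evaluate: Callable[[str], float],
    task: str,
    draft: str,
    rounds: int = 3,
) -> str:
    """Keep a rewritten prompt only when its evaluation score improves."""
    best, best_score = draft, evaluate(draft)
    for _ in range(rounds):
        candidate = complete(meta_prompt(task, best, []))
        candidate_score = evaluate(candidate)
        if candidate_score > best_score:
            best, best_score = candidate, candidate_score
    return best


# Scripted rewrites and a toy evaluator standing in for real model calls.
rewrites = iter([
    "Summarize the ticket in one sentence.",
    "Summarize.",
    "Summarize the ticket in one sentence as JSON with key 'summary'.",
])


def toy_score(prompt: str) -> float:
    return sum(key in prompt.lower() for key in ("json", "one sentence"))


best = improve(lambda _: next(rewrites), toy_score, "ticket summary", "Summarize the ticket.")
print(best)
```

The loop only accepts a rewrite when the score goes up, so a bad suggestion from the model cannot make the prompt worse.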

Automatic iteration:


Technique Comparison: When to Use Each One

| Technique | Complexity | Cost (API) | Best For | Avoid For |
|---|---|---|---|---|
| Zero-Shot | Low | Low | Simple tasks, large models | Specialized tasks |
| Few-Shot | Medium | Medium | Specific formatting, structured extraction | When examples are hard to create |
| Chain-of-Thought | Medium | Medium | Reasoning, math, logic | Purely creative tasks |
| Self-Consistency | High | High (5x) | Critical decisions, high precision needed | Simple tasks, subjective responses |
| Knowledge Gen | Medium | Medium | Questions needing technical context | Direct facts |
| Prompt Chaining | High | High | Complex pipelines, multi-step processes | Atomic tasks |
| Tree of Thoughts | Very High | Very High | Architectural decisions, multiple solutions | Problems with obvious solutions |
| ReAct | High | High | Agents, automation, debugging | Simple questions |
| RAG | High | Medium-High | Domain-specific knowledge | When model knowledge already suffices |
| Meta Prompting | Very High | High | System optimization, production | Rapid prototyping |

Universal Principles: What Always Works

Regardless of technique:

1. Be Specific

❌ “Improve this code”

✅ “Refactor this function to reduce cyclomatic complexity below 10, maintaining the same public interface”

2. Provide Context

Always include:

- Role: “You are a senior data engineer”

- Objective: “The goal is to migrate this pipeline without downtime”

- Constraints: “We cannot change the database schema”

3. Define the Format
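Spelling out the output shape in the prompt pays off most when your code checks the response against it. The sketch below pairs a format instruction with a small validator; the field names and the sample response are hypothetical.

```python
import json

# Hypothetical format instruction to append to a prompt.
FORMAT_SPEC = (
    "Respond with a single JSON object and nothing else, using this shape:\n"
    '{"severity": "low" | "medium" | "high", "summary": string, "files": [string]}'
)
REQUIRED_KEYS = {"severity", "summary", "files"}


def parse_response(raw: str) -> dict:
    """Parse the model output and fail loudly if it drifts from the format."""
    data = json.loads(raw)
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = REQUIRED_KEYS - set(data)
    if missing:
        raise ValueError(f"missing keys: {sorted(missing)}")
    return data


# Hand-written response standing in for model output.
parsed = parse_response(
    '{"severity": "high", "summary": "SQL injection", "files": ["db.py"]}'
)
print(parsed["severity"])  # high
```

Failing loudly on a malformed response is usually preferable to silently passing partial data downstream.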


4. Show, Don’t Just Tell

Instead of “use best practices,” show:


5. Iterate Based on Evidence

- Test different temperatures (0.1 for consistency, 0.8 for creativity)

- A/B test prompt variations

- Measure objectively (don’t rely on intuition)
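Measuring objectively can start with a very small harness: run each prompt variant over the same labeled cases and compare accuracy. In the sketch below, `complete` is a hypothetical model-call wrapper, and the variants, test cases, and fake model are made up so the harness runs on its own.

```python
from typing import Callable, Dict, List, Tuple


def ab_test(
    complete: Callable[[str], str],
    variants: Dict[str, str],
    cases: List[Tuple[str, str]],
) -> Dict[str, float]:
    """Return each prompt variant's accuracy on (input, expected) cases."""
    results = {}
    for name, template in variants.items():
        hits = sum(
            complete(template.format(input=text)).strip() == expected
            for text, expected in cases
        )
        results[name] = hits / len(cases)
    return results


def fake_complete(prompt: str) -> str:
    # Stand-in model: only returns a bare label when told "one word".
    label = "positive" if "love" in prompt else "negative"
    return label if "one word" in prompt else f"The sentiment is {label}."


variants = {
    "A": "Classify the sentiment: {input}",
    "B": "Classify the sentiment in one word (positive or negative): {input}",
}
cases = [("I love it", "positive"), ("I hate it", "negative")]
scores = ab_test(fake_complete, variants, cases)
print(scores)  # {'A': 0.0, 'B': 1.0}
```

With a real model you would also fix the temperature and repeat each case several times, since a single run can be noisy.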

Conclusion: From Knowledge to Mastery

Knowing these techniques is the first step. Mastery comes from applying the right technique at the right moment.

My recommendation for getting started:

- Week 1: Master Zero-Shot and Few-Shot in your real use cases

- Week 2: Add Chain-of-Thought whenever there’s reasoning involved

- Week 3: Implement Prompt Chaining for your most complex pipeline

- Week 4: Experiment with RAG if you have a knowledge base

After that, you’ll be in the top 10% of developers using AI.

And remember: LLMs are tools, not magic. They amplify your expertise, but don’t replace deep understanding of the problem domain.