On August 9, 2022, Dagster announced the release of version 1.0, signaling that the orchestrator is now considered production-ready. But what is Dagster? In the project's own words:

“Build and deploy data pipelines with extraordinary speed. The cloud-native orchestrator for the entire development lifecycle, with built-in lineage and observability, a declarative programming model, and best-in-class testability.”

Sounds promising. Let’s run some tests with a simple example. Say we have the following pipeline:
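As a purely illustrative stand-in, assume a three-step extract/transform/load pipeline written in plain Python. The function names (`extract`, `transform`, `load`, `run_pipeline`) and the hard-coded data are hypothetical, chosen only to keep the example small:

```python
from typing import List


def extract() -> List[int]:
    """Return some raw records (hard-coded here for illustration)."""
    return [1, 2, 3, 4]


def transform(records: List[int]) -> List[int]:
    """Double every record."""
    return [r * 2 for r in records]


def load(records: List[int]) -> int:
    """Pretend to persist the records and return how many were written."""
    print(f"Loaded {len(records)} records: {records}")
    return len(records)


def run_pipeline() -> int:
    """Call the three steps in order, passing each result to the next step."""
    return load(transform(extract()))


if __name__ == "__main__":
    run_pipeline()
```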

To implement this in Dagster, we need to make the following changes:

Dagster comes with a graphical interface. To access it, type the following command in your terminal:

|

|

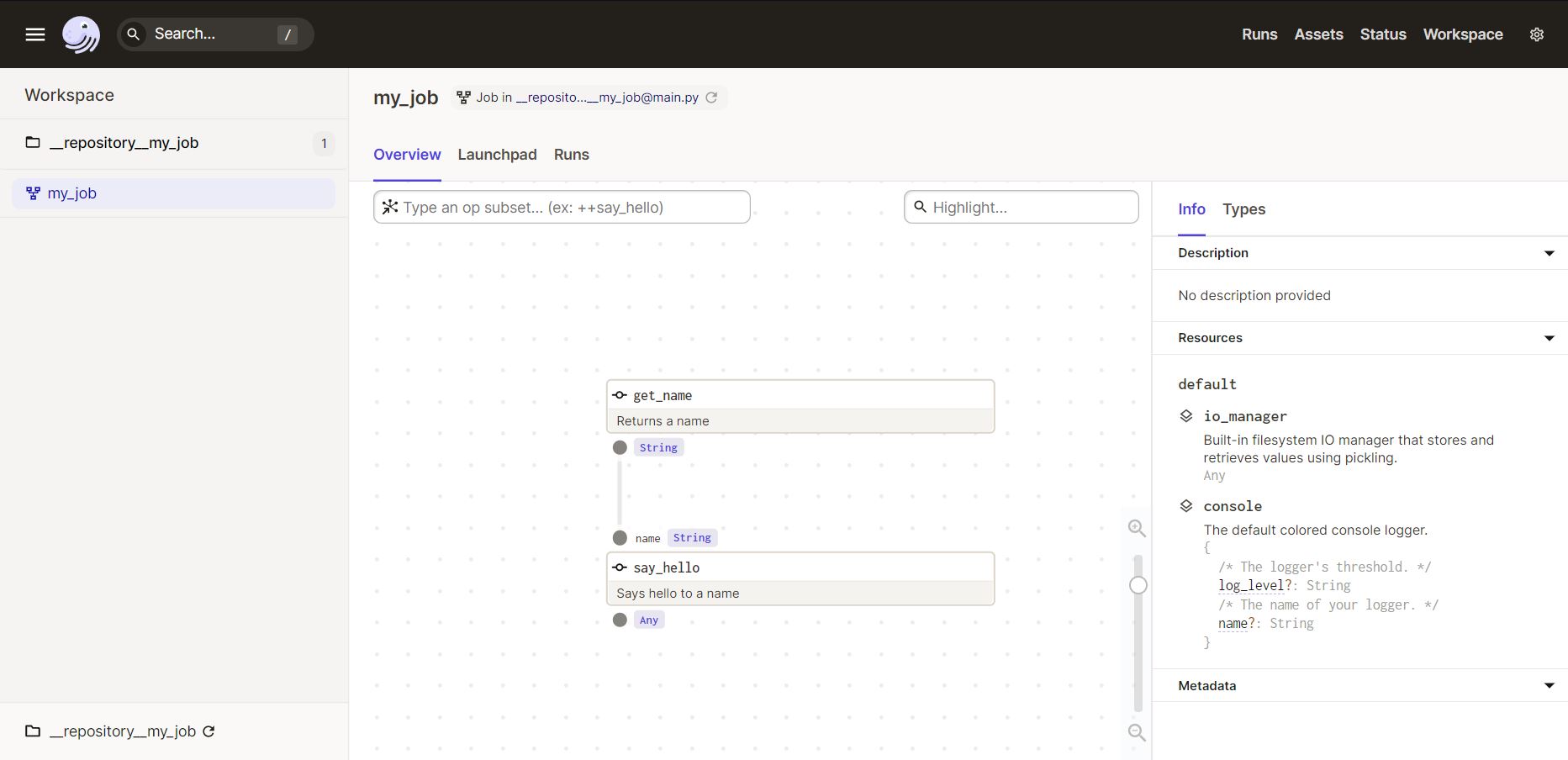

Now you can access [http://127.0.0.1:3000] in your browser, where you’ll see the following:

Here we can visualize the entire flow of our pipeline, execute it, and view the documentation automatically generated based on docstrings and type hints.

That’s it. With just a few lines of code, we got our pipeline running in Dagster.

To execute our pipeline, just click “Launchpad” (the second item in the bottom menu) and then “Launch Run” in the bottom right corner.

Done. Without our having to define the execution order explicitly, Dagster builds the entire dependency graph of the pipeline from the code in our job definition (`@job`). Inside our operations (`@op`), we can run any arbitrary Python code.

But how would we schedule our executions? Just do the following:

To work with schedules, we also need to start the Dagster Daemon, which is responsible for managing schedules, the run queue, and several other features. To do this, run `dagster-daemon run` in a separate terminal.

The definition above is one of the simplest ways to set up a schedule, but the Dagster API accepts various optional parameters that let you configure the execution in detail.

Dagster solves many of the problems currently found in Airflow, such as:

- Testability: It’s absurdly easy to write unit tests and to distinguish between environments (e.g., development and production).
- Organization: In my opinion, one of Airflow’s biggest problems is the lack of an easy, intuitive way to organize your code: all your DAGs end up lumped together, which hurts visibility. Dagster, in contrast, lets you organize your code into separate repositories (as they’re called) and can load code from multiple sources, each with its own Python version and libraries.
- Scalability: Dagster’s documentation includes a series of thorough guides on deploying to production with Docker Compose, Kubernetes, and AWS ECS, among others.

Dagster also has a concept called Software-Defined Assets, which is incredible. I won’t cover it here because the topic deserves its own article, but I definitely recommend looking into it.

Dagster has everything it takes to become the new orchestrator of the modern data stack; now it’s just a matter of seeing how the market and the community embrace it.